It might sound like the title of a science fiction film, the kind that frightens humanity and brings to mind legendary films like The Matrix or I, Robot, where AI or robots rule the planet (it also sounds like a cool brainstorming concept out of a game design school classroom). But no, the title is meant to make this short piece thought-provoking and spark a conversation, not to scare you into panic-buying a year’s worth of toilet paper.

Let’s start with Deloitte’s description of what digital humans are: “Digital Humans are AI-powered human-like virtual creatures who combine AI with a human conversation to provide the best of both worlds. They can readily link to any digital brain to share knowledge (e.g., Chatbots and NLP) and interact using both verbal and nonverbal indicators such as voice tone and facial expressions.”

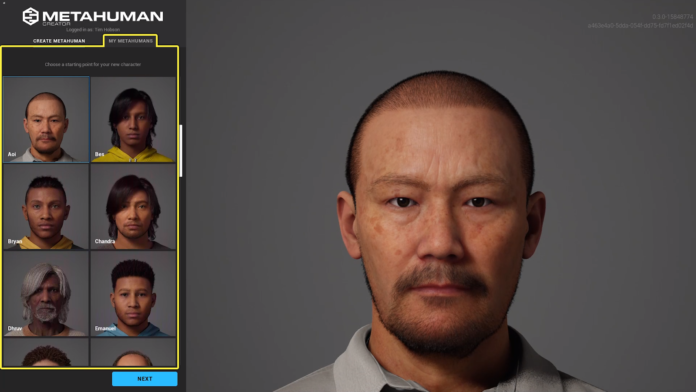

Building an entirely realistic artificial human has, until now, been one of the hardest tasks in 3D content creation. Even the most accomplished artists need considerable time, work, and equipment to create just one character, stated Vladimir Mastilovic, Epic Games’ VP of Digital Humans Technology. That barrier is now being removed through Unreal Engine. Following extensive research and development, and thanks to bringing firms like 3Lateral, Cubic Motion, and Quixel into the Epic family, the team is happy to introduce MetaHuman Creator. Unreal Engine is among the most efficient tools for building these 3D models with basic human figure motions, and it is widely used in game programming courses. Early access to MetaHuman Creator, which can produce reasonably advanced digital humans, is now open. It won’t be available to everyone right away, though: you’ll have to register your interest first, and then Epic will grant you access, which the firm says it will do swiftly.

MetaHuman Creator allows users to build new characters using simple workflows to sculpt and shape the results they want. To begin, users look through the preset faces stored in the database and select several of them as a starting point that contributes to their creation. When adjustments are made, MetaHuman Creator blends between actual examples in the library in a plausible, data-constrained manner. According to Epic, this streamlines the creation process and makes it possible to produce more believable human models without extensive technical knowledge. The company hasn’t said whether the catalog will continue to grow in the future, but considering Epic’s track record, it seems likely. The platform offers 18 distinct body types, a choice of haircuts, and several outfit options.

After you’ve finished creating your human, you can use Quixel Bridge to download it and use it in Unreal Engine for animation and motion capture. You’ll also get the source data in a Maya file, which includes meshes, skeletons, facial rigs, animation controls, and materials for the LODs. MetaHuman Creator assets are compatible with the Live Link Face iOS app. Epic Games says it is actively working to add support for ARKit, Faceware, JALI, Speech Graphics, Dynamixyz, DI4D, Digital Domain, and Cubic Motion. Animations made for one MetaHuman will play on other MetaHumans, allowing users to reuse a motion-captured performance across several Unreal Engine characters or projects.

Researchers have also investigated the feasibility of displaying an interactive representation of users in a virtual environment (VE) for ergonomic design. With today’s computing technology, the approaches available to support ergonomic design range from fully simulated digital human modeling (DHM) to fully interactive virtual reality.

Virtual reality excels at providing immersive, embodied virtual environments for creation and evaluation. As a result, it can feel like an extension of the real world. Metaverse technology opens the door to a more developed and purposeful infrastructure that connects 3D virtual worlds and lets a wide range of customers and businesses take part in building user experiences effectively and efficiently. It is essential to have a creative tool that can keep up with augmented reality (AR), virtual reality (VR), and mixed reality (MR) technologies, which are continuously expanding and getting more powerful by the day. Unreal Engine gives you the tools you need to develop a team, assets, and process that can meet your creative vision and quality standards now and in the future. This will matter even more as artificial intelligence advances: it’s one thing to look human, but it’s another to have emotional responses. Given this level of realism, brand commercials (TVCs) and even films could be shot with MetaHumans in the future; in fact, several films have already been made with MetaHumans, though not with MetaHumans in the lead roles.

The technology is great, yet it falls squarely into the uncanny valley. Epic Games debuted Siren, its synthetic human technology, during the Game Developers Conference in San Francisco in 2018. Epic Games has also used this technology to turn a motion-captured Andy Serkis into an alien fish-man while he performed the “Tomorrow” monologue from Shakespeare’s Macbeth live.

Conclusion

In short, “MetaHumans will not take over, but they will be the future.” When real humans cannot take part, they can help establish a relationship, answer questions, and provide a more expressive experience than voice or text exchanges. The technology is remarkable, but there will be consequences. It democratizes the development of hyper-realistic digital human faces and drastically reduces the time taken to make some video games and films. Like all technology developments, though, it can be misused: the internet is already a market for well-made 3D avatars of celebrities and ex-partners.
